Mentors & Projects

(Spring ’26)

BASE Mentors are pushing AI Safety, Governance, & Security forward while investing in up-and-coming researchers, practitioners, and leaders.

Tobi Olaiya

Tobi Olaiya is a Senior Manager of Ethical Use Policy at Salesforce, where she leads the operationalization of the company's AI acceptable use policies. She specializes in AI governance, product safety, and cross-functional policy initiatives that balance innovation with ethical technology practices.

Prior to Salesforce, Tobi held Trust & Safety roles at Twitter, where she led the development of the platform's first recommendations explainer, advancing algorithmic transparency and user choice. She holds a Master of Public Policy from the University of Maryland.

Ethical Use & Policy, Salesforce

Alisar Mustafa

Head of AI Policy, Duco

Track: Policy & Governance

Alisar Mustafa is the Head of AI Policy at Duco, where she leads AI safety and governance initiatives for enterprise clients to ensure they operate safely, securely and responsibly. A strategic leader in AI governance, she specializes in model fine-tuning, adversarial testing, and regulatory compliance. She has held AI governance roles at Meta, the Federation of American Scientists, and the U.S. Census Bureau.

She also authors a widely read AI policy newsletter, providing insights on emerging regulations and industry developments. Her expertise spans AI risk mitigation, model evaluation, policy analysis and stakeholder engagement across enterprise and government sectors.

Krystal Jackson

Non-Resident Fellow, Center for Long-Term Cybersecurity

Krystal Jackson is a Non-Resident Research Fellow at the Center for Long-Term Cybersecurity, AI Security Initiative, where she conducts research into the global security implications of artificial intelligence. Before this role, she worked as a Research Associate at the Frontier Model Forum, where she focused on advancing AI-cyber safety and AI security with industry leaders.

Krystal also previously served as an AI Capabilities Analyst at the Cybersecurity and Infrastructure Security Agency, driving critical AI initiatives within the Infrastructure Security Division. Her research experience includes leadership with the Center for AI and Digital Policy Research Clinic and roles as a Junior AI Fellow at the Center for Security and Emerging Technology and as a Public Interest Technology Fellow at the U.S. Census Bureau. She serves as the Research Director of BASE.

Track: Alignment & Security

Cozmin Ududec

Science of Evaluation Lead, UK AISI

Cozmin Ududec currently leads the Science of Evaluation team at the UK AI Security Institute. He was previously Chief Scientist at Invenia Labs, doing research on electricity grids and machine learning. His background is in mathematical physics, in particular the foundations of quantum mechanics and quantum information theory. He has broad research experience with conceptual, pure, and applied mathematical problems.

Track: Alignment

Track: Policy & Governance

Latisha Harry

Senior Fellow, Portulans Institute

Track: Policy & Governance

Latisha is an independent research and policy consultant specializing in AI governance, digital rights, and technology-driven institutional innovation. She designs and leads complex, multi-stakeholder research programs that bridge the worlds of policy, civil society, and emerging technology.

Her work spans AI risk assessment, data governance, misinformation analysis, and legislative mapping, and she has worked with organisations such as the Effective Institutions Project, OpenAI, Stanford HAI, CIVICUS, Global Witness, and the International Labour Organization, among others. Latisha’s contributions range from red-teaming advanced AI systems to analysing global surveillance trends, evaluating government responses to AI risks, building cross-national policy datasets, and producing high-impact reports for policymakers.

Titi Akinsanmi

Global Policy Team Lead, Google

With over four years as Global Policy Team Lead at Google, I focus on shaping policies for the responsible use of, and access to, generative AI products and hardware platforms. My team works to develop trustworthy technologies that respect individual and societal rights while addressing safety and ethical considerations in the digital space.

A dedicated advocate for responsible innovation, I bring extensive expertise in public access, government consultations, and engaging with officials to address critical issues in technology governance. My mission is to ensure that digital tools and policies empower and protect users worldwide, fostering a safer, more inclusive future in the evolving digital economy.

Track: Policy & Governance

Colin Shea-Blymyer

Research Fellow, CSET

Colin Shea-Blymyer is a Research Fellow at Georgetown’s Center for Security and Emerging Technology (CSET), where he works on the CyberAI Project. His research has spanned safe reinforcement learning, formal methods, adversarial machine learning, and AI ethics. Previously, he was a graduate researcher with MITRE, where he helped establish the National Institute of Standards and Technology (NIST) program on adversarial machine learning research at the National Cybersecurity Center of Excellence (NCCOE). He holds an MS and BS in Computer Science from Virginia Tech. He has a PhD in Computer Science and Artificial Intelligence from

Track: Alignment

Amari Cowan

Emerging Technology Fellow, U.S. Census Bureau

Amari is an AI policy and governance professional working at the intersection of AI and public policy, translating technical safety, risk, and performance considerations into practical governance frameworks. Amari’s experience includes senior roles across Big Tech and the U.S. federal government, including serving as the first AI Officer at the Federal Energy Regulatory Commission and working on global technology governance initiatives at Meta and TikTok.

She currently serves as a Technologist-in-Residence, Emerging Technology Fellow at the U.S. Census Bureau, where she works on experimental policy frameworks and advises leadership across the federal landscape on ethical AI governance at scale.

Track: Governance

Gabrielle Hibbert

Non-Resident Fellow, New America

Gabrielle Hibbert is currently the AI Policy Lead for the Commonwealth of Pennsylvania, where she writes and develops governance solutions for the Commonwealth. Drawing on her experience designing user-driven policy backed by industry-leading research, Gabrielle has helped establish innovative and transparent policy that addresses the needs of risk, security, and data privacy. In 2023, she was named a non-resident fellow at New America, where she developed and published a paper on user-informed nutrition labels for generative AI tools. Her work can be found at Rubrik, the Kapor Center, and the Bipartisan Policy Center, among other outlets.

She has served as a pro bono technical expert and Board Member of the Heller School for Social Policy's Tech Policy center since 2022.

Track: Policy & Governance

Elfredah Kevin-Alerechi

Chief Innovation Officer, Journotech

Elfredah Kevin-Alerechi is an AI innovator, journalist, researcher, and ethical technology leader based in the UK. She is the founder of NewsAssist AI and Journotech, where she also serves as Chief Innovation Officer, leading responsible AI innovation, policy development, and ethical deployment. Her work focuses on AI safety, ethics, governance, and security, with a strong emphasis on building inclusive, human-centered AI systems that serve communities rather than marginalize them.

Her background spans AI product development, AI policy drafting, governance design, and research mentorship.

Through Journotech, she has trained over 300 professionals across 21 countries and built a network of nearly 1,000 practitioners, including educators, researchers, journalists, newsrooms, and civil society organisations. She designs and delivers training on responsible AI use, AI governance frameworks, secure AI deployment, and ethical innovation. She has also spoken at international conferences on AI security, responsible usage, and ethical AI implementation.

Jeff Fields

Track: Governance

Track: Security & Governance

Senior Fellow, Berkeley Institute for Security and Governance

Jeff Fields is a mission-driven leader with nearly two decades of experience at the intersection of national security, emerging technology, and innovation. Over a 20-year career in the intelligence community, he pioneered cyber operations platforms, led the FBI’s first enterprise program for securing AI, semiconductor, and biotechnology systems, and co-established a landmark AI research partnership with UC Berkeley. He now serves as a Senior Fellow of Practice at UC Berkeley’s Goldman School of Public Policy, where he teaches and researches the geostrategic implications of frontier technologies, including AI, space, and quantum systems. Jeff advises startups, institutions, and defense-focused organizations on navigating national security risks and regulatory challenges, helping translate complex policy constraints into strategic advantages.

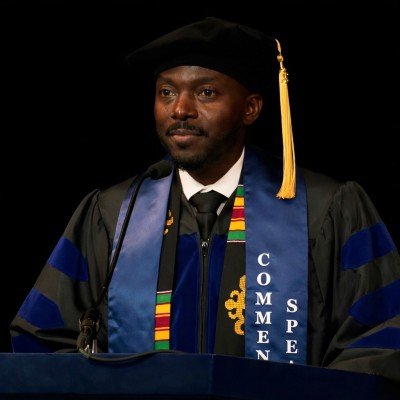

Dr. Gaspard Baye

Founder & CEO, Valix AI

Track: Security

Gaspard Baye is a security AI scientist and Ph.D. with 10+ years of experience building AI-driven offensive and defensive security solutions. He has 12+ publications in venues such as NeurIPS, HASP, and IEEE Access (140+ citations) and holds CVE recognition and multiple top cybersecurity certifications, including OSCP, PNPT, and CEH Practical. His work has been showcased at DEFCON, OWASP, BSides, and The Diana Initiative, with Hall of Fame honors from Nokia and Ford. He specializes in developing security AI algorithms, conducting penetration testing, and building intelligent threat detection systems. He founded Valix AI, where he currently serves as CTO and leads the development of foundational AI security platforms that enable intelligent agents to detect, analyze, and neutralize both conventional and AI-powered threats.

Serena Oduro

Policy Manager, Data & Society Institute

Serena Oduro is an AI policy expert and writer driven by her dedication to realizing an AI ecosystem that truly benefits us all. As Data & Society Research Institute’s policy manager, Serena Oduro leads the organization’s state-level policy engagement. Before her work on state policy, Serena led Data & Society’s engagement as a founding member within the US AI Safety Institute Consortium, where she advocated for a sociotechnical approach to AI safety. She is a HUMAN Residency Fellow, awarded by Ragdale, Lake Forest College, and The Mellon Foundation in support of her developing poetry collection which centers a Black feminist analysis and approach to AI. Her work has appeared in academic journals and news media, including Politico, Internet Policy Review, Meatspace Press, and Patterns. Previously, Serena was a technology equity fellow at The Greenlining Institute, where she provided key support for Greenlining’s sponsorship of the Automated Decision Systems Accountability Act of 2021.

Track: Alignment

Heramb Podar

Fellow, Center for AI and Digital Policy

Heramb Podar is an AI policy fellow at the Center for AI and Digital Policy. He was previously a GovAI winter fellow and completed the ERA and FIG fellowships. Currently, he works with Encode on their International Task Force to coordinate global activity among chapters. Heramb holds Bachelor's and Master's degrees in chemistry from IIT Roorkee.

Track: Policy & Governance

Lawrence Krukrubo

AI Safety Researcher, University of Wolverhampton

Lawrence Krukrubo is a Researcher and Lecturer specializing in AI Safety, Causal Fairness, and Explainable AI (XAI). His work focuses on mitigating bias in Large Language Models and designing "Safe-by-Design" systems. In his recent paper, he introduced the LRR-TED framework, demonstrating that hybrid human-AI teams can achieve 94% accuracy by treating experts as "Exception Handlers." Lawrence is a Member of the London Initiative for Safe AI (LISA). At work, he mentors students on bridging the gap between theoretical fairness frameworks and robust, deployable code.

Anagha Late

Director of Strategy, BASE

Track: Security & Alignment

Anagha Late is a public-interest cybersecurity and technology policy researcher and practitioner. She specializes in AI safety evaluation, privacy engineering, and technology policy for the public sector, designing research and tools that translate technical complexity into accountability for the institutions and communities that depend on it most.

She currently serves as an AI Governance and Technology Policy Consultant working with municipalities across the nation through the GovAI Coalition, where her work spans AI procurement consulting, digital security capacity-building for politically vulnerable organizations, and privacy-centered legal analysis to build AI literacy and risk management capacity. Anagha holds a Master of Information and Cybersecurity with a Technology Policy concentration from UC Berkeley and a Bachelor’s degree in Computer Science and Human-Computer Interaction from WPI. At BASE, she serves as Director of Strategy, leading efforts to shape and scale initiatives at the intersection of community, governance, and security.

Track: Alignment