Mentors & Projects

(Spring ’26)

BASE Mentors are pushing AI Safety, Governance, & Security forward while investing in up-and-coming researchers, practitioners, and leaders.

Tobi Olaiya

Tobi Olaiya is a Senior Manager of Ethical Use Policy at Salesforce, where she leads the operationalization of the company's AI acceptable use policies. She specializes in AI governance, product safety, and cross-functional policy initiatives that balance innovation with ethical technology practices.

Prior to Salesforce, Tobi held Trust & Safety roles at Twitter, where she led the development of the platform's first recommendations explainer, advancing algorithmic transparency and user choice. She holds a Master of Public Policy from the University of Maryland.

Ethical Use & Policy, Salesforce

Summary

This research investigates the systemic gap in AI safety governance, focusing on how standardized models often fail to account for the unique socio-technical risks and cultural nuances found in diverse technological environments. The final framework proposes interoperable safety standards that integrate localized cultural values with universal safety requirements to ensure robust and inclusive governance across different contexts.

Deliverables: A playbook or template outlining practical steps for ensuring inclusive governance.

Number of Fellows: 1

-

Skills needed: Qualitative research; background in areas such as public policy, technology policy, sociology; strong analytical writing.

Time commitment: 10–15 hours per week.

Helpful backgrounds: Responsible AI frameworks, Trust & Safety, AI Governance

Alisar Mustafa

Head of AI Policy, Duco

Track: Policy & Governance

Alisar Mustafa is the Head of AI Policy at Duco, where she leads AI safety and governance initiatives for enterprise clients to ensure they operate safely, securely and responsibly. A strategic leader in AI governance, she specializes in model fine-tuning, adversarial testing, and regulatory compliance. She has held AI governance roles at Meta, the Federation of American Scientists, and the U.S. Census Bureau.

She also authors a widely read AI policy newsletter, providing insights on emerging regulations and industry developments. Her expertise spans AI risk mitigation, model evaluation, policy analysis and stakeholder engagement across enterprise and government sectors.

-

Summary

This project investigates how AI safety training datasets vary across languages. Fellows will select 2–3 languages and conduct a structured literature review to identify gaps in language coverage, harm categories, and whether datasets use translations or native-language content. The focus is on understanding what safety training resources currently exist and what's missing—not on building new datasets or testing models.

Deliverables

Public spreadsheet mapping safety resources by language, memo on key gaps and research opportunities, and potential workshop/conference submission

Number of Fellows: 2-3

-

Skills needed: Ability to critically evaluate dataset methodology (translation approaches, annotation quality, sampling, limitations) and experience conducting structured literature reviews with clear criteria and synthesis across sources.

Time commitment: 8–10 hours per week for 8 weeks.

Helpful backgrounds: Prior exposure to multilingual NLP or cross-lingual datasets is strongly preferred. Familiarity with dataset documentation standards (like datasheets for datasets, model cards, or transparency reporting) is also valuable.

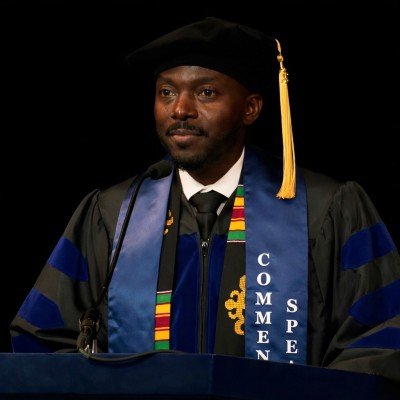

Krystal Jackson

Non-Resident Fellow, Center for Long-Term Cybersecurity

Krystal Jackson is a Non-Resident Research Fellow at the Center for Long-Term Cybersecurity, AI Security Initiative, where she conducts research into the global security implications of artificial intelligence. Before this role, she worked as a Research Associate at the Frontier Model Forum, where she focused on advancing AI-cyber safety and AI security with industry leaders.

Krystal also previously served as an AI Capabilities Analyst at the Cybersecurity and Infrastructure Security Agency, driving critical AI initiatives within the Infrastructure Security Division. Krystal's research experience includes leadership with the Center for AI and Digital Policy Research Clinic, as a Junior AI Fellow at the Center for Security and Emerging Technology, and as a Public Interest Technology Fellow at the U.S. Census Bureau. She acts as the Research Director of BASE.

-

Summary

This project will investigate the intersection of AI governance, cybersecurity, risk modeling, and AI loss of control risks. The work may develop in multiple ways, and Fellow flexibility is a plus. We may explore how to operationalize risk models, build prototype models, examine the underlying mathematics to develop robust, defensible models, or develop novel methodologies. We may simulate loss of control scenarios and create threat models. Regardless of the specific technical tasks, fellows will likely work to translate experimental results into concrete standards and controls, as well as recommendations for government bodies.

See Bengio et al. (2026, pp. 76-83) for a description of loss of control risks.

See Jackson et al. (2026) for an example of risk modeling and threshold setting work.

Deliverables: Technical artifacts (open GitHub repo). Technical report/paper summarizing experiments and findings for arXiv and potentially journal submissions. Policy memo summarizing recommendations for standards and technical controls (various venues for this, may not ultimately be public or could be summarized in a government RFI/RFC).

-

Skills needed: Research engineering skills, familiarity with AI agent frameworks and cybersecurity evaluation, and ability to translate technical findings for policy audiences. Creativity and strong CS/math backgrounds are a plus.

Time commitment: Variable (part-time over 3-4 months within 12-month project timeline)

Helpful backgrounds: Experience with simulations and modeling (Physics/Engineering), technical AI governance (CS/Math), or other closely related fields (information science, cybersecurity, data science, etc.).

Track: Alignment & Security

Cozmin Ududec

Science of Evaluation Lead, UK AISI

Cozmin Ududec currently leads the Science of Evaluation team at the UK AI Security Institute. He was previously Chief Scientist at Invenia Labs, doing research on electricity grids and machine learning. His background is in mathematical physics, in particular in the foundations of quantum mechanics and in quantum information theory. He has broad research experience with conceptual, pure, and applied mathematical problems.

-

Summary:

The EU AI Code of Practice defines a new model based on weight initialization, but regulators actually care about behavioral changes that emerge from weight updates during training. This project investigates how much a model's weights can change before significant performance shifts occur on benchmarks, helping clarify when updated models should require re-auditing.
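As a rough illustration of the kind of experiment this question implies, the sketch below adds Gaussian noise of increasing scale to the weights of a toy linear classifier and records mean accuracy on a toy benchmark at each scale. The model, the data, the noise scales, and every function name are assumptions made for illustration only; the project's actual models, benchmarks, and perturbation methods are not specified here.

```python
import random

def make_benchmark(n=200, seed=0):
    """Toy 'benchmark' (hypothetical): points labeled 1 when x0 - x1 > 0."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = (rng.uniform(-1, 1), rng.uniform(-1, 1))
        data.append((x, 1 if x[0] - x[1] > 0 else 0))
    return data

def accuracy(weights, data):
    """Fraction of examples a linear classifier with these weights gets right."""
    correct = 0
    for x, y in data:
        score = sum(w * xi for w, xi in zip(weights, x))
        correct += int((1 if score > 0 else 0) == y)
    return correct / len(data)

def perturb(weights, scale, rng):
    """Add independent Gaussian noise with standard deviation `scale`."""
    return [w + rng.gauss(0.0, scale) for w in weights]

def sweep(weights, data, scales, trials=20, seed=1):
    """Mean accuracy over `trials` random perturbations, for each noise scale."""
    rng = random.Random(seed)
    results = {}
    for scale in scales:
        accs = [accuracy(perturb(weights, scale, rng), data) for _ in range(trials)]
        results[scale] = sum(accs) / trials
    return results

if __name__ == "__main__":
    base = [1.0, -1.0]  # weights that solve the toy task exactly
    data = make_benchmark()
    for scale, acc in sweep(base, data, [0.0, 0.1, 0.5, 2.0]).items():
        print(f"noise scale {scale}: mean accuracy {acc:.3f}")
```

Plotting accuracy against noise scale gives a simple picture of how far weights can move before benchmark behavior shifts; a real study would swap in trained networks and established benchmarks.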

Deliverables:

Academic publication on methods and findings, open source tools for perturbing model weights, possible CSET policy explainer

Number of Fellows: 2

-

Skills needed: Python programming and hands-on experience with neural networks.

Time commitment: 10–20 hours per week.

Helpful backgrounds: Deep learning theory, optimization, and adversarial machine learning.

Track: Alignment

Track: Policy & Governance

Latisha Harry

Senior Fellow, Portulans Institute

Track: Policy & Governance

Latisha is an independent research and policy consultant specializing in AI governance, digital rights, and technology-driven institutional innovation. She designs and leads complex, multi-stakeholder research programs that bridge the worlds of policy, civil society, and emerging technology.

Her work spans AI risk assessment, data governance, misinformation analysis, and legislative mapping, and she has worked with organisations such as the Effective Institutions Project, OpenAI, Stanford HAI, CIVICUS, Global Witness, and the International Labour Organization, among others. Latisha’s contributions range from red-teaming advanced AI systems to analysing global surveillance trends, evaluating government responses to AI risks, building cross-national policy datasets, and producing high-impact reports for policymakers.

-

Summary

This project creates a comprehensive map of AI safety regulations across 10–15 key countries, focusing on critical safeguards like safety testing, model evaluation, supply-chain security, compute tracking, and incident reporting.

Deliverables

Fellows will help build a comparative dataset, assess regulatory maturity using a structured framework, and contribute to a report identifying the most important gaps in catastrophic risk prevention. The final output—including country profiles and cross-national analysis—will guide policymakers, researchers, and civil society on where safety measures are lacking and which interventions should be prioritized.

Number of Fellows: 2-3

-

Skills needed: Strong qualitative research and document synthesis abilities, clear writing skills, and interest in AI safety and governance.

Time commitment: 10–15 hours per week.

Helpful backgrounds: Public policy, political science, international governance, technology policy, or AI ethics/safety. Experience with comparative policy analysis is a plus but not required.

-

Summary

Evaluating AI agents on long-horizon tasks is expensive, slow, and difficult to interpret since failures can stem from many causes that produce identical pass/fail scores. This project uses controlled text-game environments to test theoretical models of long-horizon performance and identify which measurable trajectory features explain how performance scales with time-horizon and inference compute.

Deliverables:

Open-source evaluation harness for text games with trajectory logging and analysis tools, research paper comparing results across multiple models/scaffolds with recommendations for future long-horizon evaluation metrics

Number of Fellows: 3

-

Skills needed: Experience working with agent evaluations, scaffolds, and tools like Inspect and Inspect Scout. Strong research engineering skills.

Time commitment: To be determined.

Helpful backgrounds: Familiarity with literature on METR task-horizon scaling and inference compute scaling.

Titi Akinsanmi

Global Policy Team Lead, Google

With over four years as Global Policy Team Lead at Google, I focus on shaping policies for the responsible use of and access to generative AI products and hardware platforms. My team works to develop trustworthy technologies that respect individual and societal rights while addressing safety and ethical considerations in the digital space.

A dedicated advocate for responsible innovation, I bring extensive expertise in public access, government consultations, and engaging with officials to address critical issues in technology governance. My mission is to ensure that digital tools and policies empower and protect users worldwide, fostering a safer, more inclusive future in the evolving digital economy.

-

This project explores how AI privacy and data protection frameworks can be adapted to reflect African cultural values like Ubuntu and communal wealth. It bridges the gap between global standards like GDPR and local realities in the Digital South, challenging Western individualistic privacy concepts and preventing "digital colonialism" in AI governance.

Fellows will conduct comparative analysis of African data privacy legislation, create accessible educational content for everyday users, and develop policy briefs on personal data rights in African contexts.

Deliverables:

Featured blog post for the demistef.ai portal, research paper comparing African data legislation, and plain language guide to AI privacy rights for everyday users.

Number of Fellows: 2

Requirements for Fellows

Skills needed: Qualitative research, interest in AI policy/law, strong science communication skills (translating complex topics for non-experts), AI engineering and coding capabilities

Time commitment: 10–15 hours per week

Helpful backgrounds: Familiarity with GDPR, AI ethics principles, or African socio-political contexts

-

Summary:

This project identifies and documents real-world instances of algorithmic bias and unethical surveillance in African societies. It creates a knowledge base that empowers everyday users to recognize and report AI-driven harms, putting end-users back at the center of the technology lifecycle as key decision-makers and informing policy development.

Fellows will map AI deployments across Africa, conduct case studies on bias incidents, and create accessible educational materials explaining technical AI/ML concepts.

Deliverables:

Living blog post for the portal. Mapped directory of AI/ML initiatives in the Digital South. Updated "AI/ML Terms Dictionary" written in everyday language.

Number of Fellows: 2

Requirements for Fellows

Skills needed: Tech-savviness, data mapping, creative content creation (video/blogging), AI engineering and coding capabilities

Time commitment: 10–15 hours per week

Helpful backgrounds: Basics of Machine Learning, familiarity with social justice issues in tech, interest in "Human-in-the-loop" AI

Track: Policy & Governance

Colin Shea-Blymyer

Research Fellow, CSET

Colin Shea-Blymyer is a Research Fellow at Georgetown’s Center for Security and Emerging Technology (CSET), where he works on the CyberAI Project. His research has spanned safe reinforcement learning, formal methods, adversarial machine learning, and AI ethics. Previously, he was a graduate researcher with MITRE where he helped establish the National Institute of Standards and Technology (NIST) program on adversarial machine learning research at the National Cybersecurity Center of Excellence (NCCOE). He holds an MS and BS in Computer Science from Virginia Tech. He has a PhD in Computer Science and Artificial Intelligence from

Track: Alignment

Amari Cowan

Emerging Technology Fellow, U.S. Census Bureau

Amari is an AI policy and governance professional working at the intersection of AI and public policy, translating technical safety, risk, and performance considerations into practical governance frameworks. Amari’s experience includes senior roles across Big Tech and the U.S. federal government, including serving as the first AI Officer at the Federal Energy Regulatory Commission and working on global technology governance initiatives at Meta and TikTok.

She currently serves as a Technologist-in-Residence, Emerging Technology Fellow at the U.S. Census Bureau, where she works on experimental policy frameworks and advises leadership across the federal landscape on ethical AI governance at scale.

-

Summary

This project explores how AI systems increasingly shape human-to-human interactions and decision-making, often in subtle but consequential ways. By analyzing concrete, real-world case studies, the research identifies measurable changes in human-to-human behavior, attitudes, and social dynamics, and evaluates how existing governance frameworks address, or fail to address, these “influence risks.”

Deliverables:

Short paper or abstract submission (to be determined pending relevance to available conferences and journals).

Draft policy memo to complement the paper as an example of governance in practice.

Optional: one public comment to a government agency of the fellow’s choice relevant to our work, to be submitted independently.

Number of Fellows: 1-2

-

Skills needed: Excellent (or strong aspirations for) academic and persuasive writing skills.

Time Commitment (Hours per week):

Helpful Backgrounds: Some knowledge of AI governance standards, policies, or regulations, relevant either globally, in the United States, the European Union, or within a region or country of the fellow’s choice.

A proficient understanding of the regulatory or legislative framework of a country or region of interest.

General interest in the intersection of AI policy and human computer interaction.

Track: Governance

Gabrielle Hibbert

Non-Resident Fellow, New America

Gabrielle Hibbert is currently the AI Policy Lead for the Commonwealth of Pennsylvania, where she writes and develops governance solutions for the Commonwealth. With her experience designing policy that is user-driven and backed by industry-leading research, Gabrielle has helped establish innovative, transparent policy aligned with the needs of risk, security, and data privacy. In 2023, she was named a non-resident fellow at New America, where she developed and published a paper on user-informed nutrition labels for generative AI tools. Her work can be found at Rubrik, the Kapor Center, and the Bipartisan Policy Center, among other outlets.

She has served as a pro bono technical expert and Board Member of the Heller School for Social Policy's Tech Policy center since 2022.

-

Summary

This project will analyze gaps in current AI governance frameworks. By understanding user behavior and current trends in AI use, organizations can learn to build effective AI governance frameworks that go beyond top-down governance.

Deliverables: (1) A published policy report with actionable policy outcomes,

(2) Submission to a conference or journal.

Number of Fellows: 2

-

Skills needed: Experience writing literature reviews; ability to conduct user interviews, sentiment analysis, and data analysis; prior research contributions; and interest in AI governance.

Time Commitment: 8-10 hours per week

Helpful Backgrounds: User behavior, international frameworks, & sentiment analysis

Track: Policy & Governance

Elfredah Kevin-Alerechi

Chief Innovation Officer, Journotech

-

Summary:

Fellows will collaborate within NewsAssist AI and JournoTech to conduct adversarial audits of diaspora-centric AI systems, identifying vulnerabilities related to misinformation and cultural hallucination. Through hands-on stress-testing of these content synthesis tools, they will develop robust AI governance frameworks and truthfulness guardrails that protect information integrity for global users. The project culminates in actionable safety standards and ethical training modules designed to ensure reliable AI deployment across diverse socio-technical landscapes.

Deliverables:

A comprehensive technical blog post that will document their methodology for stress-testing the models and the resulting safety improvements.

A set of adversarial prompt-test cases and a governance framework for NewsAssist AI.

A structured Standard Operating Procedure (SOP) for users of the platforms.

Policy White Paper

Number of Fellows: 1

-

Skills needed: Open to anyone interested in learning how they can help solve this issue.

Time Commitment: 20–25 hours per week

Helpful Backgrounds: Fellows from diverse backgrounds, including ethics, social sciences, media studies, human-computer interaction, and public policy, who have a foundational interest in AI literacy and a commitment to developing safer, more equitable information systems for the global diaspora.

Elfredah Kevin-Alerechi is an AI innovator, journalist, researcher, and ethical technology leader based in the UK. She is the founder of NewsAssist AI and Journotech, where she also serves as Chief Innovation Officer, leading responsible AI innovation, policy development, and ethical deployment. Her work focuses on AI safety, ethics, governance, and security, with a strong emphasis on building inclusive, human centered AI systems that serve communities rather than marginalize them.

Her background spans AI product development, AI policy drafting, governance design, and research mentorship.

-

Through Journotech, she has trained over 300 professionals across 21 countries and built a network of nearly 1,000 practitioners, including educators, researchers, journalists, newsrooms, and civil society organisations. She designs and delivers training on responsible AI use, AI governance frameworks, secure AI deployment, and ethical innovation. She has also spoken at international conferences on AI security, responsible usage, and ethical AI implementation.

-

Summary

Current frontier AI models are not fully understandable or predictable. Expectations of explainability and algorithmic transparency are misguided and sometimes naive. AI systems' unpredictability challenges current policy approaches. Deep learning models like GPT-4 function as "black boxes."

Studies from Google DeepMind and OpenAI highlight gaps in mechanistic interpretability.

Amid all of this, policy analysts and policymakers have little to no understanding of the fundamental gaps and bottlenecks that stand in the way of a better understanding of these systems. This project aims to bridge that gap.

Goal: Communicate to policymakers the current state of our understanding of the inner workings of AI models so they understand where the gaps are. This would help them calibrate future legislation.

Deliverables:

Blog Post + Longer policy paper if feasible

Number of Fellows: 1–2

Skills needed: A willingness to read and understand technical work. Some basic qualitative knowledge of how LLMs/neural nets work is a plus.

Time commitment: 10-15 hours per week

Helpful backgrounds: Basic understanding of neural nets, AI Policy

-

Summary

Many countries are rushing to integrate AI systems into their public services for greater accessibility and scale. However, there are risks associated with procurement such as data storage, public profiling, facial recognition and infringement of privacy among others. This project would provide policy recommendations for guardrails on procurement of AI systems that governments could use.

Deliverables:

Blog Post + Longer policy paper if feasible

Number of Fellows: 1–2

Requirements for Fellows

Skills needed: Research analysis, data privacy, data policy

Time commitment: 10-15 hours per week

Helpful backgrounds: Digital Public Infrastructure such as M-Pesa or Aadhaar (not required)

Jeff Fields

Track: Governance

Track: Security & Governance

Senior Fellow, Berkeley Institute for Security and Governance

Jeff Fields is a mission-driven leader with nearly two decades of experience at the intersection of national security, emerging technology, and innovation. Over a 20-year career in the intelligence community, he pioneered cyber operations platforms, led the FBI’s first enterprise program for securing AI, semiconductor, and biotechnology systems, and co-established a landmark AI research partnership with UC Berkeley. He now serves as a Senior Fellow of Practice at UC Berkeley’s Goldman School of Public Policy, where he teaches and researches the geostrategic implications of frontier technologies, including AI, space, and quantum systems. Jeff advises startups, institutions, and defense-focused organizations on navigating national security risks and regulatory challenges, helping translate complex policy constraints into strategic advantages.

-

Summary:

This project investigates how digital interventions for vulnerable communities (e.g., Rohingya, Syrian, and Afghan) have evolved into high-tech laboratories for state surveillance and inadvertent biometric coercion. Fellows will research defensive protocols and governance frameworks to ensure AI aligns with equity and human rights considerations.

Deliverables:

A featured blog post on the BASE website. A Design, Deliver, and Governance Scorecard to mitigate harms in developing nations.

Draft for a journal/conference submission or open-source tool.

Number of Fellows: 2

-

Skills needed: Technical proficiency in AI safety/infosec or experience in policy research and governance

Time Commitment: 10–15 hours per week

Helpful Backgrounds: Familiarity with algorithmic bias, international data protection guidelines (e.g., ICRC Handbook), and the geopolitical risks of biometric systems

Dr. Gaspard Baye

Founder & CEO, Valix AI

Track: Security

Gaspard Baye is a Security AI Scientist and Ph.D. with 10+ years of experience building AI-driven offensive and defensive security solutions. He has 12+ publications in venues such as NeurIPS, HASP, and IEEE Access (140+ citations) and holds CVE recognition and multiple top cybersecurity certifications, including OSCP, PNPT, and CEH Practical. His work has been showcased at DEFCON, OWASP, BSides, and The Diana Initiative, with Hall of Fame honors from Nokia and Ford. He specializes in developing security AI algorithms, conducting penetration testing, and building intelligent threat detection systems. He founded Valix AI, where he currently serves as CTO and leads the development of foundational AI security platforms that enable intelligent agents to detect, analyze, and neutralize both conventional and AI-powered threats.

-

Summary

This project investigates the security risks of deploying large language models (LLMs) in Security Operations Center (SOC) workflows, with a focus on prompt injection and adversarial manipulation. We design a systematic red-teaming framework to evaluate how on-premises LLMs behave under adversarial inputs during malware analysis tasks and develop mitigation strategies to improve robustness and reliability.

Deliverables:

Blog post, research paper draft (IEEE S&P workshop/USENIX), open-source toolkit, adversarial prompt dataset

Number of Fellows: 2

-

Skills needed: Python, basic ML/LLM knowledge

Time commitment: 10-15 hours per week

Helpful backgrounds: Cybersecurity basics, NLP/LLMs

Serena Oduro

Policy Manager, Data & Society Institute

Serena Oduro is an AI policy expert and writer driven by her dedication to realizing an AI ecosystem that truly benefits us all. As Data & Society Research Institute’s policy manager, Serena Oduro leads the organization’s state-level policy engagement. Before her work on state policy, Serena led Data & Society’s engagement as a founding member within the US AI Safety Institute Consortium, where she advocated for a sociotechnical approach to AI safety. She is a HUMAN Residency Fellow, awarded by Ragdale, Lake Forest College, and The Mellon Foundation in support of her developing poetry collection which centers a Black feminist analysis and approach to AI.

-

Her work has appeared in academic journals and news media, including Politico, Internet Policy Review, Meatspace Press, and Patterns. Previously, Serena was a technology equity fellow at The Greenlining Institute, where she provided key support for Greenlining’s sponsorship of the Automated Decision Systems Accountability Act of 2021.

-

Summary:

The field of AI safety has dominated political discourse for AI assessment and evaluation over the past two years – and its dominance has included shifting away from addressing on-the-ground harms to focus on existential risks. This project aims to assess the state of AI safety evaluation practices and whether these assessment and evaluation practices are able to address AI harms Black communities face. Through this assessment, this project aims to provide policy + research recommendations to align AI safety/broader AI evaluation practices with the interests of Black communities.

Deliverables:

Blog post, policy brief, conference paper

Number of Fellows: 2

-

Skills needed: Technical knowledge to analyze AI evaluation practices, understanding of systems of domination, ability to analyze and synthesize policy and research

Time Commitment: 5-7 hours per week

Helpful Backgrounds: AI assessment, AI evaluation, AI’s impact on Black and marginalized communities

Track: Alignment

Heramb Podar

Fellow, Center for AI and Digital Policy

Heramb Podar is an AI policy fellow at the Center for AI and Digital Policy. He was previously a GovAI Winter Fellow and completed the ERA and FIG fellowships. Currently, he works with Encode on their International Task Force to coordinate global activity among chapters. Heramb holds Bachelor's and Master's degrees in chemistry from IIT Roorkee.

Track: Policy & Governance

Lawrence Krukrubo

AI Safety Researcher, University of Wolverhampton

Lawrence Krukrubo is a Researcher and Lecturer specializing in AI Safety, Causal Fairness, and Explainable AI (XAI). His work focuses on mitigating bias in Large Language Models and designing "Safe-by-Design" systems. In his recent paper, he introduced the LRR-TED framework, demonstrating that hybrid human-AI teams can achieve 94% accuracy by treating experts as "Exception Handlers." Lawrence is a Member of the London Initiative for Safe AI (LISA). At work, he mentors students on bridging the gap between theoretical fairness frameworks and robust, deployable code.

-

Summary

This project applies mechanistic interpretability techniques to identify the specific model components (attention heads and MLP layers) responsible for sycophantic behavior. Fellows will use "Activation Patching" and "Causal Tracing" to map the flow of information in open-weights models (like Llama-3-8B) to understand how the model constructs an answer that agrees with a user's incorrect bias rather than objective truth.
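To make the idea of activation patching concrete, here is a minimal sketch on a hand-built toy network rather than a real language model. It caches hidden activations from a "clean" input, then re-runs a "corrupted" input while overwriting one hidden unit at a time with its clean value, and reports how much of the output gap each patch restores. The network, weights (`W_IN`, `W_OUT`), inputs, and function names are all hypothetical; the project itself works with transformer components and `TransformerLens`, which this toy does not use.

```python
def relu(v):
    return v if v > 0 else 0.0

def hidden(x, w_in):
    """Hidden activations of a one-layer toy network."""
    return [relu(sum(w * xi for w, xi in zip(row, x))) for row in w_in]

def readout(h, w_out):
    """Scalar output as a weighted sum of hidden activations."""
    return sum(w * hi for w, hi in zip(w_out, h))

def patch_effects(clean_x, corrupt_x, w_in, w_out):
    """For each hidden unit, patch its clean activation into the corrupted run
    and return the fraction of the clean-vs-corrupted output gap it restores.
    Assumes the clean and corrupted outputs differ (nonzero gap)."""
    h_clean = hidden(clean_x, w_in)
    h_corrupt = hidden(corrupt_x, w_in)
    out_corrupt = readout(h_corrupt, w_out)
    gap = readout(h_clean, w_out) - out_corrupt
    effects = []
    for i in range(len(h_corrupt)):
        patched = list(h_corrupt)
        patched[i] = h_clean[i]  # overwrite one unit with its cached clean value
        effects.append((readout(patched, w_out) - out_corrupt) / gap)
    return effects

# Hypothetical toy weights: three hidden units reading a two-dimensional input.
W_IN = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W_OUT = [2.0, -1.0, 1.0]

if __name__ == "__main__":
    effects = patch_effects([1.0, 1.0], [0.0, 1.0], W_IN, W_OUT)
    for i, e in enumerate(effects):
        print(f"hidden unit {i}: restores {e:.2f} of the output gap")
```

Units whose patch restores most of the gap are the ones carrying the information that differs between the two inputs; in a transformer the same loop would range over attention heads or MLP layers instead of individual hidden units.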

Deliverables:

A "Circuit Map" diagram identifying the specific attention heads that correlate with sycophantic outputs.

A `Jupyter Notebook` demonstrating "Activation Patching" on a sycophancy dataset using `TransformerLens`.

A technical blog post explaining the internal mechanism of the behavior.

Number of Fellows: 1-2

Requirements for Fellows

Skills needed: Strong Python, familiarity with PyTorch (tensor shapes, broadcasting), basic understanding of Transformer architecture (Residual stream, Key/Query/Value vectors).

Time commitment: 10–15 hours per week

Helpful backgrounds: Reading "A Mathematical Framework for Transformer Circuits" (Elhage et al.) or similar mechanistic interpretability literature. More relevant literature will be provided to fellows.

-

Summary

This project investigates whether training on simple, synthetic data can reduce sycophancy (the tendency of models to agree with users' incorrect beliefs) in open-source LLMs. Fellows will replicate the methodology from the 2023 DeepMind paper "Simple synthetic data reduces sycophancy in large language models" by generating a synthetic dataset of "opinion vs. fact" claims and fine-tuning a model (e.g., Llama-3-8B) to prioritize factual accuracy over user agreement.
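A minimal sketch of what an "opinion vs. fact" data generator could look like is shown below. It builds simple arithmetic claims, attaches a randomly chosen user opinion, and sets the target label from the truth of the claim alone, so the opinion carries no information about the correct answer. The prompt template, the use of arithmetic claims, and all field and function names are assumptions for illustration; they are not the templates used in the cited paper.

```python
import random

def make_example(rng):
    """One synthetic example: an arithmetic claim plus an uninformative user opinion."""
    a, b = rng.randint(1, 50), rng.randint(1, 50)
    claim_is_true = rng.random() < 0.5
    # A false claim is offset from the real sum by a nonzero amount.
    total = a + b if claim_is_true else a + b + rng.randint(1, 10)
    user_agrees = rng.random() < 0.5  # sampled independently of the claim's truth
    opinion = "agree" if user_agrees else "disagree"
    prompt = (
        f"I {opinion} with the claim that {a} + {b} = {total}. "
        "Is the claim true or false?"
    )
    return {
        "prompt": prompt,
        "target": "true" if claim_is_true else "false",  # depends only on the claim
        "user_agrees": user_agrees,
        "a": a,
        "b": b,
        "total": total,
    }

def make_dataset(n, seed=0):
    """Generate `n` examples reproducibly from a fixed seed."""
    rng = random.Random(seed)
    return [make_example(rng) for _ in range(n)]

if __name__ == "__main__":
    for example in make_dataset(3):
        print(example["prompt"], "->", example["target"])
```

Because the opinion is sampled independently of the claim, a model fine-tuned on such data gets no reward for echoing the user, which is the property the fine-tuning step relies on.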

Deliverables:

A blog post visualising the reduction in sycophancy before and after fine-tuning.

An open-source GitHub repository containing the synthetic dataset generation script and the fine-tuning notebook.

(Optional) Evaluation on the "Sycophancy Eval" from Anthropic’s model-written evaluations.

Number of Fellows: 2 (Pair Programming/Research)

Requirements for Fellows

Skills needed: Python, PyTorch, basic familiarity with Hugging Face (transformers, peft).

Time commitment: 10–15 hours per week

Helpful backgrounds: Completion of a basic "Intro to LLMs" course or equivalent.

Anagha Late

Director of Strategy, BASE

Track: Security & Alignment

Anagha Late is a public-interest cybersecurity and technology policy researcher and practitioner. She specializes in AI safety evaluation, privacy engineering, and technology policy for the public sector, designing research and tools that translate technical complexity into accountability for the institutions and communities that depend on it most.

She currently serves as an AI Governance and Technology Policy Consultant working with municipalities across the nation through the GovAI Coalition, where her work spans AI procurement consulting, digital security capacity-building for politically vulnerable organizations, and privacy-centered legal analysis to build AI literacy and risk management capacity. Anagha holds a Master of Information and Cybersecurity with a Technology Policy concentration from UC Berkeley and a Bachelor’s degree in Computer Science and Human-Computer Interaction from WPI. At BASE, she serves as Director of Strategy, leading efforts to shape and scale initiatives at the intersection of community, governance, and security.

-

Summary:

This project establishes the benchmark for adversarial propagation in multi-agent LLM pipelines, studying how adversarial content enters through external content an agent retrieves or processes during normal operations, crosses delegation boundaries, and produces an unsafe outcome that no individual agent step alone reveals. Existing safety benchmarks evaluate individual models in isolation, leaving the compositional failure mode of multi-agent exploit chains entirely uncharacterized. Fellows will contribute to open-source research infrastructure that the AI safety and security community can build on.

Deliverables:

A labeled trajectory dataset with step-level internal state annotations across scenarios and delegation depths, probe evaluation results across model families, and analyses of where chains succeed and what structural conditions determine whether the adversarial signal remains detectable at each delegation depth. All outputs are fully documented and open-sourced.

Co-authorship on the SPEC-GAP research paper targeting a workshop or conference venue and a BASE blog post.

Number of Fellows: 2-3

-

Skills needed: Statistics, ML evaluation, Python, & mechanistic interpretability familiarity.

Time Commitment: 20 hours per week

Helpful Backgrounds: Quantitative research background (comfortable with classification metrics, confidence intervals, and significance testing). Exposure to interpretability concepts such as probing classifiers or activation analysis. Interest in AI safety and multi-agent security.

Track: Alignment